What follows is not so much a formal tool or interpreter as an experimental wrapper application that I built mostly to try out an idea.

I originally got interested in interactive fiction because I am an avid TTRPG player and found that no computer games or RPGs quite scratched that itch. Much of what I have been doing in terms of narrative design is an attempt to emulate parts of the tabletop roleplaying experience. No surprise, then, that the rapid development of LLMs in recent years gave me ideas.

I have experimented a bunch, but the results have always been disappointing. LLMs make terrible Game Masters: they don't keep a consistent world state, they don't write particularly inspiring prose, and unless you prompt them very directly to be at least a little antagonistic, they are too timid to offer any real challenge.

But after reading through this forum extensively and talking with the community about parsers, I realised that languages like TADS 3 and Dialog already do a really good job of maintaining the illusion of a world. The only time they need to excuse themselves is when neither the author nor the developer of the standard library has accounted for a specific player input. I noted elsewhere that this is a great place for an LLM, or a dedicated, custom-trained AI, to carve out a little domain for itself.

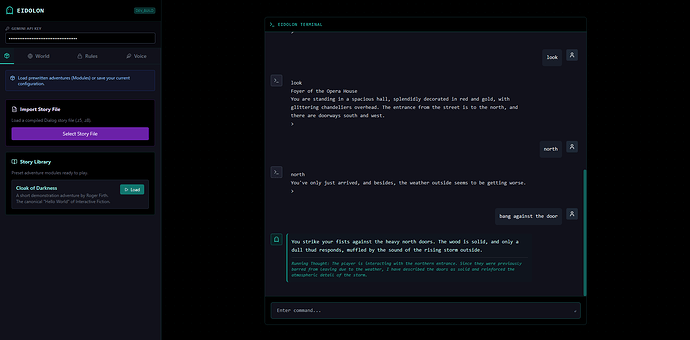

So, over the past week I have been building Eidolon, which I would like to present here: a Dialog interpreter (or really, a Z-machine wrapper) with a Ghost in the Machine to help handle fallback cases. I've been testing it a bunch, and it works surprisingly well!

So, why Dialog? Because the language relies on predicate logic to determine what is and isn't possible, which makes it very easy to hook an LLM into it. Instead of pre-programming a set of commands, you only need to create a few hooks that let the LLM update the world state when the player's action demands it.

To that end, any game used with Eidolon needs to include the Eidolon library:

```
%% Eidolon Library
%% ===========================================================================
%% Eidolon Layer
%% ===========================================================================

(dynamic (object $ has narrative $))

%% Injection logic used by the LLM to change the world state

(grammar [eidolon_note [object] [any]] for [eidolon_note $ $])

(perform [eidolon_note $Obj $Words])
    *(object $Obj)
    (now) ($Obj has narrative $Words)
    \[ACK_INJECTION\] (line)

(grammar [eidolon_do [any]] for [eidolon_force $])

(perform [eidolon_force $Words])
    (try $Words)
    \[ACK_INJECTION\] (line)

%% For ANY input, offer eidolon_fallback as a possible action.

(understand $Words as [eidolon_fallback])
    ($Words = [$ | $])    %% only match non-empty word lists

%% Make the fallback action extremely unlikely.

(very unlikely [eidolon_fallback])

%% Explicitly allow this action, bypassing any refusal or prevention rules.

(allow [eidolon_fallback])
    (true)

(perform [eidolon_fallback])
    (line)
    \[EIDOLON_FALLBACK\]
    (dump-eidolon-state)
    \[\/EIDOLON_FALLBACK\] (line)
    (stop)

%% Sidebar updates after actions

(after [any $Action])
    ($Action = $Action)
    \[EIDOLON_STATE\]
    (dump-eidolon-state)
    \[\/EIDOLON_STATE\] (line)

%% ===================================================================
%% JSON State Exporter
%% ===================================================================

%% Each list is closed with null, and the narrative object with "_ignore": null,
%% so the trailing commas printed by the helper rules still yield valid JSON.

(dump-eidolon-state)
    \{\" (no space)
    (current player $P)
    (if) ($P has parent $Loc) (then)
        location\":\ \" (no space)
        (name $Loc) (no space)
        \"\,\ \" (no space)
    (endif)
    visible\":\ \[ (no space)
    (exhaust) *(dump-visible-json)
    null (no space)
    \]\,\ \" (no space)
    inventory\":\ \[ (no space)
    (exhaust) *(dump-inventory-json)
    null (no space)
    \]\,\ \" (no space)
    narrative\":\ \{ (no space)
    (exhaust) *(dump-narrative-json)
    \"_ignore\" (no space)
    \:\ null (no space)
    \} (no space)
    \} (no space)

(dump-visible-json)
    (current player $P)
    ($P has parent $Loc)
    *(everything $Obj)
    ($Obj is in room $Loc)
    ~($Obj = $P)
    \" (no space)
    (name $Obj) (no space)
    \"\, (no space)

(dump-inventory-json)
    (current player $P)
    *($Item has parent $P)    %% direct children of the player (carried or worn)
    \" (no space)
    (name $Item) (no space)
    \"\, (no space)

(dump-narrative-json)
    *(object $Obj)
    ($Obj has narrative $Fact)
    \" (no space)
    (name $Obj) (no space)
    \" (no space)
    \:\ \" (no space)
    (print words $Fact) (no space)
    \"\, (no space)
```
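On the wrapper side, the marker blocks have to be cut out of the game text and their JSON parsed. The following is a minimal Python sketch, not code from the Eidolon repository: it assumes the wrapper receives the Z-machine output as a plain string, that the interpreter does not insert line breaks inside the JSON, and that the markers appear exactly as the library prints them. The names `extract_blocks` and `parse_state` are hypothetical. It also drops the `null` and `"_ignore"` sentinels that the exporter adds to absorb its trailing commas.

```python
import json
import re

# Matches [TAG] ... [/TAG] as printed by the library (assumed exact marker text).
BLOCK_RE = re.compile(r"\[(EIDOLON_STATE|EIDOLON_FALLBACK)\](.*?)\[/\1\]", re.DOTALL)


def parse_state(raw: str) -> dict:
    """Parse one (dump-eidolon-state) payload and strip the exporter's sentinels."""
    state = json.loads(raw)
    # Each exported list ends with a null that only exists to absorb a trailing comma.
    for key in ("visible", "inventory"):
        state[key] = [item for item in state.get(key, []) if item is not None]
    # Likewise, "_ignore": null closes the narrative object.
    state.get("narrative", {}).pop("_ignore", None)
    return state


def extract_blocks(output: str) -> tuple[str, list[tuple[str, dict]]]:
    """Split game output into player-facing text and (tag, state) pairs."""
    blocks = [(m.group(1), parse_state(m.group(2))) for m in BLOCK_RE.finditer(output)]
    visible_text = BLOCK_RE.sub("", output).strip()
    return visible_text, blocks
```

The player-facing text and the sidebar data come out of the same pass, so the raw JSON never has to be shown in the transcript.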

What I have is far from complete and not really intended as a serious replacement interpreter; it's mostly a proof of concept I'm playing around with. It runs a Z-machine in the background and intercepts the game's output whenever the eidolon_fallback action is triggered.
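The intercept step can be sketched as a single routing function. This is an illustrative Python sketch under stated assumptions, not the actual Eidolon code: `ask_llm` is a hypothetical stand-in for whatever model call the wrapper makes, the prompt wording is invented, and the wrapper is assumed to remember the player's command itself, because the `[EIDOLON_FALLBACK]` block only carries the world state. The LLM's answer is sent back through the library's `eidolon_do` grammar.

```python
import re
from typing import Callable

FALLBACK_RE = re.compile(r"\[EIDOLON_FALLBACK\](.*?)\[/EIDOLON_FALLBACK\]", re.DOTALL)


def build_prompt(command: str, state_json: str) -> str:
    """Hypothetical prompt: the player's words plus the exported world state."""
    return (
        "The parser could not handle the player's command.\n"
        f"Command: {command}\n"
        f"World state (JSON): {state_json.strip()}\n"
        "Reply with one game command, or nothing if the action is impossible."
    )


def handle_turn(command: str, output: str,
                ask_llm: Callable[[str], str]) -> tuple[str, list[str]]:
    """Return (text for the player, follow-up commands for the Z-machine)."""
    match = FALLBACK_RE.search(output)
    if match is None:
        # The parser handled the input itself; pass the output through untouched.
        return output, []
    reply = ask_llm(build_prompt(command, match.group(1))).strip()
    # Strip the marker block so the player never sees the raw JSON.
    text = FALLBACK_RE.sub("", output).strip()
    return text, ([f"eidolon_do {reply}"] if reply else [])
```

Keeping the model behind a plain callable means the same routing works whether the call goes to a hosted API or a local model.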

I’m also writing a dungeon crawler in Dialog, which I do intend to hook the Eidolon library into. I have to admit, I am having a lot of fun with this.

> [!note] Disclaimer
>
> Please don't take this as me saying AI is the best thing ever and that we should upgrade all our interpreters to support it. This is purely a proof of concept I'm playing around with personally; I have burned my fingers on this subject enough already. But I am incredibly giddy that the proof of concept worked, and I got to learn Dialog in the process.

Either way, I should probably move to the Å-machine, but I wanted to get the logic working first, and when I started this project I didn't really understand the difference yet. I also need to make the LLM better at inserting predicates. In some test runs I tried doing questionable things to my pants, but if no pants are defined, there is nothing to attach predicate logic to. As a result, most of this logic currently sits under a generic “narrative” property: `(dynamic (object $ has narrative $))`.
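One way to keep the LLM from targeting objects that don't exist (like the undefined pants) is to check its proposed note against the object names in the last exported state before sending `eidolon_note`. This is a hedged Python sketch: `note_command` is a hypothetical helper, it assumes the printed object names are also nouns the parser understands, and it strips punctuation on the assumption that the fact is printed back verbatim by the JSON exporter, where a stray `"` would break the output.

```python
import re
from typing import Optional


def note_command(target: str, fact: str, state: dict) -> Optional[str]:
    """Build an eidolon_note command, or None if the target isn't a known object.

    Assumes `state` is a parsed state payload whose "visible" and "inventory"
    lists hold printed object names.
    """
    names = state.get("visible", []) + state.get("inventory", [])
    known = {name.lower() for name in names if name}
    if target.lower() not in known:
        # No object to attach the fact to (e.g. pants that were never defined).
        return None
    # Keep only letters, digits and spaces so the fact re-exports as valid JSON.
    words = re.sub(r"[^A-Za-z0-9 ]+", " ", fact).split()
    if not words:
        return None
    return f"eidolon_note {target.lower()} {' '.join(words).lower()}"
```

When the check fails, the wrapper can fall back to narration only, leaving the world state untouched.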

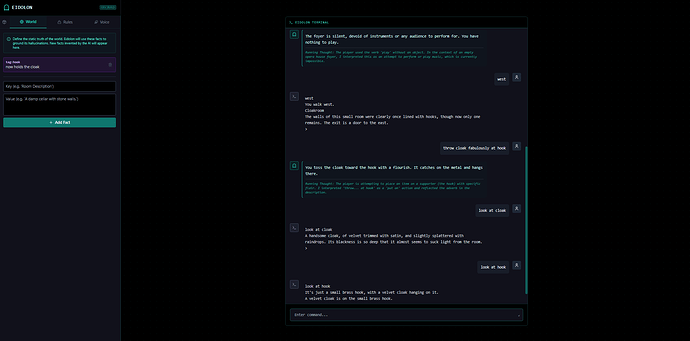

This narrative property is mostly a container that helps the LLM understand what has happened; it does not affect the world state much. Actual state changes happen when the LLM interprets what my command is probably meant to do and then performs it, adding a flavorful description. In the screenshot above, for example, it interprets tossing the cloak with a flourish as PUT CLOAK ON HOOK.
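The "interpret, then perform with flavor" step is easier to handle if the model is asked for a structured reply. The Python sketch below assumes a reply shape of `{"narration": ..., "command": ...}`; that schema, the `Interpretation` type and both function names are hypothetical, not Eidolon's actual protocol. The narration goes to the player, and the plain command (for example `put cloak on hook`) is routed through the library's `eidolon_do` grammar. Anything that isn't valid JSON is treated as narration with no state change.

```python
import json
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interpretation:
    narration: str          # flavor text shown to the player
    command: Optional[str]  # plain parser command, e.g. "put cloak on hook"


def parse_reply(reply: str) -> Interpretation:
    """Parse a reply assumed to look like {"narration": ..., "command": ...}."""
    # Models sometimes wrap JSON in a ```json fence; strip it if present.
    body = re.sub(r"^```(?:json)?\s*|\s*```$", "", reply.strip())
    try:
        data = json.loads(body)
    except json.JSONDecodeError:
        data = None
    if not isinstance(data, dict):
        # Not the expected object: keep the text as narration, change nothing.
        return Interpretation(narration=reply.strip(), command=None)
    command = str(data.get("command") or "").strip() or None
    return Interpretation(narration=str(data.get("narration", "")).strip(), command=command)


def to_game_input(interp: Interpretation) -> list[str]:
    """Commands for the Z-machine: route the interpreted action through eidolon_do."""
    return [f"eidolon_do {interp.command}"] if interp.command else []
```

Because the game itself still executes the command, the parser's own rules decide whether the action succeeds; the LLM only supplies the interpretation and the prose.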

Anyway, I just wanted to share this. For anyone curious, here is the current repository.

In case anyone wants to try it live, I uploaded a demo build to the same server that hosts ChronicleHub, but I prepended the domain name.

It does require your own Gemini API key, but if you send me a PM, I'm willing to lend you a key so you can experiment on my dime. I'm not a rich person, but the API calls are relatively cheap. Still, as this is mostly experimental, I prefer to keep some control over that for the moment, until I have something like a local Ollama instance running to handle the API calls.