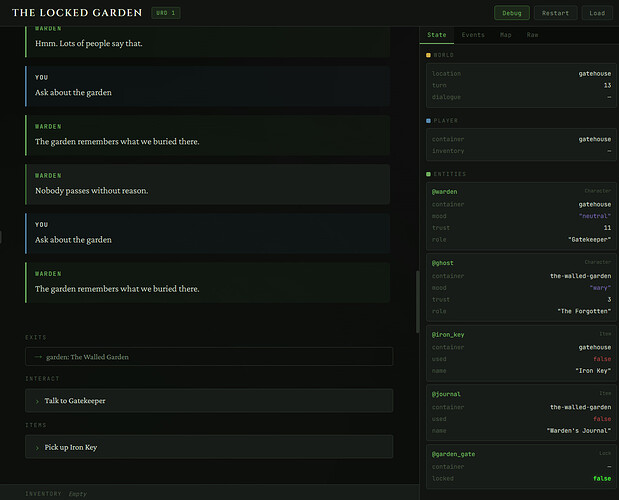

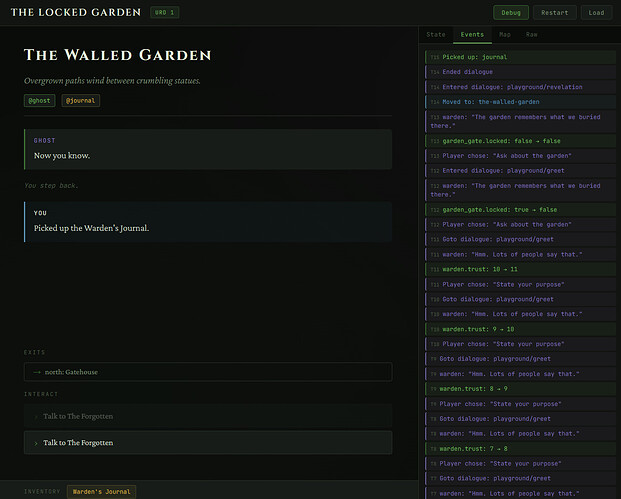

You know the halting problem all too well, and the advantages of a declarative system, and that is essentially the answer to your question. Because the world is declared rather than programmed, it opens the door to Monte Carlo simulation, dead-end detection, and reachability analysis.
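To make that concrete, here is a minimal sketch of reachability analysis and dead-end detection over a declarative world description. The `WORLD` dictionary, its location names, and the helper functions are all hypothetical and are not Urd's actual schema. The point is only that, when the world is plain data, these questions reduce to graph searches that always terminate.

```python
from collections import deque

# Hypothetical declarative world: each location lists the locations it leads to.
# This is NOT Urd's actual schema, only a stand-in to illustrate the analysis.
WORLD = {
    "cell": ["corridor"],
    "corridor": ["cell", "armory", "pit"],
    "armory": ["corridor", "gate"],
    "pit": [],          # no exits: once here, the player is stuck
    "gate": [],         # the intended ending
    "vault": ["gate"],  # nothing leads here
}
START, GOALS = "cell", {"gate"}


def reachable(world, start):
    """Breadth-first search: every location reachable from start."""
    seen, queue = {start}, deque([start])
    while queue:
        for nxt in world[queue.popleft()]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def dead_ends(world, start, goals):
    """Reachable locations from which no goal can be reached."""
    return {
        loc
        for loc in reachable(world, start)
        if not (reachable(world, loc) & goals)
    }


unreachable = set(WORLD) - reachable(WORLD, START)
stuck = dead_ends(WORLD, START, GOALS)
print("unreachable:", sorted(unreachable))
print("dead ends:", sorted(stuck))
```

On this toy world the analysis flags `vault` as unreachable and `pit` as a dead end. A Monte Carlo pass could be layered on the same data by sampling random walks from `START` and counting how often each ending is hit, though that is left out here to keep the sketch short.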

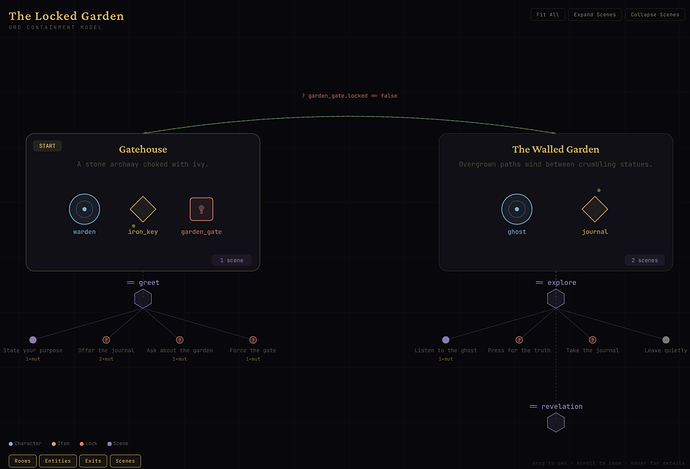

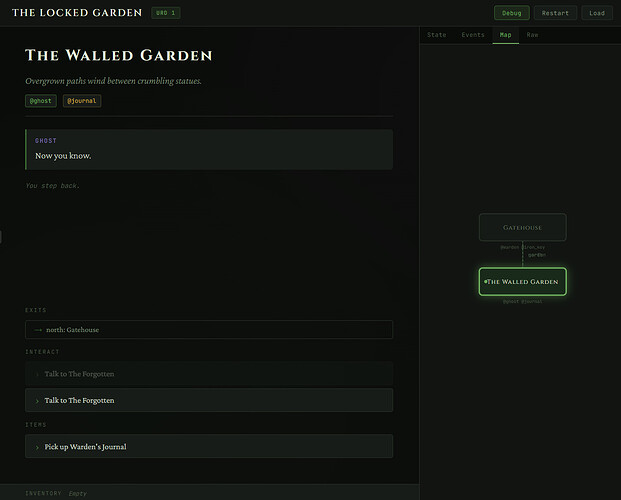

I love anything with a node-based user interface, so being able to build that kind of tool on top would excite me a lot. With a descriptive schema, I hope to be able to take a break and work on some nice-looking UI stuff.

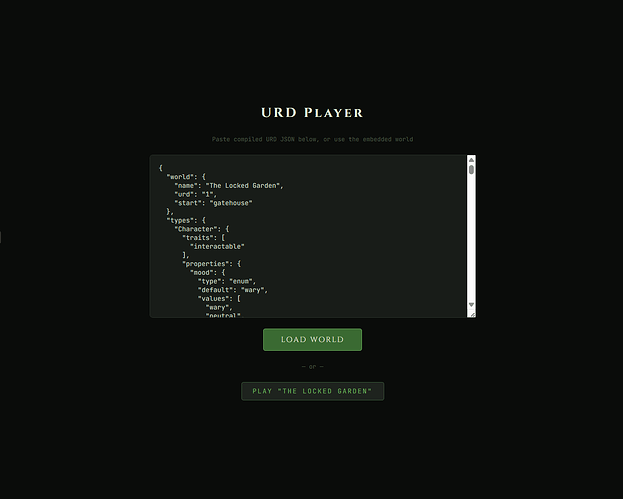

Will I succeed? Honestly, no idea. But Urd gives me a nice playground to work with, as it sits at the intersection of technology and art. That’s the place I have always lived in.

Will it degenerate into halting-problem issues because of lambda extensions? For sure. I have not spent much time thinking about exactly where the lines are going to be drawn. But I am hopeful that AI will unlock something more than a faster code typewriter. I think an AI is uniquely positioned to reason about a world without having to execute it, thus enabling something that a programming language could not.