Hi all, new here. Wanted to introduce myself and a project I’ve been working on, partly because this seems like the community most likely to tell me where I’m wrong.

I’m building Urd, a declarative schema language for interactive worlds. The short version: you author .urd.md files (Markdown with YAML frontmatter and a set of sigils for dialogue, choices, conditions, and effects), and a compiler produces JSON that any runtime can consume. The scope is deliberately broad, covering spatial models, typed entities, containment, state mutation, and narrative flow in a single format. Think of it as trying to unify what Inform does for world modeling with what ink does for portable narrative, in a format that’s authored in a text editor and consumed by anything.
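To make the authoring-to-JSON idea concrete, here is a minimal Python sketch of only the very first stage: separating YAML frontmatter from the Markdown body and emitting JSON. It assumes the frontmatter is fenced by `---` lines (the common Markdown convention, not necessarily Urd’s rule), uses a hypothetical flat `key: value` reader in place of a real YAML parser, and the `meta`/`body` output fields are invented for illustration. It does not reflect Urd’s actual sigils or output schema.

```python
import json


def split_frontmatter(source: str) -> tuple[str, str]:
    """Split a document into (frontmatter, body).

    Assumption: frontmatter sits at the top of the file between two lines
    containing only '---'. Urd's real delimiter rules may differ.
    """
    lines = source.splitlines()
    if not lines or lines[0].strip() != "---":
        return "", source  # no frontmatter block at all
    for i in range(1, len(lines)):
        if lines[i].strip() == "---":
            return "\n".join(lines[1:i]), "\n".join(lines[i + 1:])
    raise ValueError("unterminated frontmatter block")


def naive_frontmatter_to_dict(frontmatter: str) -> dict[str, str]:
    """Hypothetical stand-in for a YAML parser: flat 'key: value' lines only."""
    result = {}
    for line in frontmatter.splitlines():
        if not line.strip() or line.lstrip().startswith("#"):
            continue  # skip blank lines and comments
        key, sep, value = line.partition(":")
        if not sep:
            raise ValueError(f"expected 'key: value', got {line!r}")
        result[key.strip()] = value.strip()
    return result


def compile_to_json(source: str) -> str:
    """Produce a JSON document; 'meta' and 'body' are illustrative field names."""
    frontmatter, body = split_frontmatter(source)
    document = {
        "meta": naive_frontmatter_to_dict(frontmatter),
        # A real compiler would parse dialogue/choice/condition sigils here.
        "body": body,
    }
    return json.dumps(document, indent=2)


if __name__ == "__main__":
    example = "---\ntitle: The Cellar\nstart: cellar\n---\nA damp room."
    print(compile_to_json(example))
```

Everything interesting (sigil parsing, linking entity references, type checking) would happen after this split; the point is only that the input is plain text and the output is a runtime-neutral JSON document.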

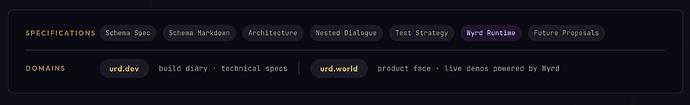

The spec covers a compiler pipeline (parser, linker, compiler), a formal grammar, a JSON output schema, and a reference runtime (Wyrd). For a single person, I genuinely can’t think of a more complex thing to attempt short of writing an operating system.

Here’s the part that might raise eyebrows: I’m building this almost entirely with AI assistance. Not “I asked ChatGPT to write me a compiler,” but more like a structured pipeline where AI agents draft specs, other agents review them, findings get consolidated, contradictions get surfaced, and I make the architectural decisions. It’s an experiment in how far you can push spec-driven development when AI is doing the heavy lifting on volume and you’re doing the heavy lifting on judgment. A giant iterative sausage-making machine of AIs reviewing each other’s work, basically.

The process itself is almost more interesting to me than the product. This project lets me explore a question I find genuinely fascinating: can a single person with AI leverage actually architect and deliver something at this complexity level, if they’re disciplined about specifications and validation?

So here’s what I’m looking for from this community:

- The thesis. Urd’s core claim is that no existing format unifies spatial simulation, typed world state, and portable narrative in a single engine-agnostic schema. Inform 7 comes closest but is coupled to its runtime. ink is portable but dialogue-only. Twine/Twee handles branching but has no world model. Is this gap real, or am I missing something?

- The approach. Spec-first, then formal grammar, then compiler, then runtime. The test corpus is split into positive cases (must parse) and negative cases (must fail with correct errors). Each bug becomes a new test. Does this track with how compiler projects in this space have been bootstrapped, or are there lessons I should learn before I learn them the hard way?

- The AI elephant in the room. I know “AI-generated” triggers a justified skepticism reflex. I’d ask that you evaluate the specs on their merits rather than their origin. That said, if the specs read like slop, I genuinely want to know. That’s exactly the kind of AI-induced psychosis check I need. The whole point of posting here is to get feedback from people who’ve spent decades thinking about interactive worlds, not to market a product.
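For anyone curious what the positive/negative corpus split from the approach point looks like in practice, here is a minimal Python sketch of such a harness. Everything in it is hypothetical: the `CompileError` type with a stable `code`, the `Case` record, the `E_EMPTY` code, and `toy_compile`, which stands in for the real compiler. The one idea it shows is that a negative case only passes if it fails with the expected error, not just any error.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class CompileError(Exception):
    """Hypothetical compiler error carrying a stable, testable error code."""

    def __init__(self, code: str, message: str):
        super().__init__(f"{code}: {message}")
        self.code = code


@dataclass
class Case:
    name: str
    source: str
    expected_error: Optional[str] = None  # None = positive case (must compile)


def run_corpus(compile_fn: Callable[[str], object], cases: list[Case]) -> list[str]:
    """Return failure descriptions; an empty list means every case passed.

    Exceptions other than CompileError are not caught, so an internal
    compiler crash surfaces immediately instead of counting as a pass.
    """
    failures = []
    for case in cases:
        try:
            compile_fn(case.source)
        except CompileError as err:
            if case.expected_error is None:
                failures.append(f"{case.name}: unexpected error {err.code}")
            elif err.code != case.expected_error:
                failures.append(
                    f"{case.name}: expected {case.expected_error}, got {err.code}"
                )
            continue
        if case.expected_error is not None:
            failures.append(
                f"{case.name}: expected {case.expected_error}, but it compiled"
            )
    return failures


def toy_compile(source: str) -> dict:
    """Toy stand-in for the real compiler: rejects empty documents only."""
    if not source.strip():
        raise CompileError("E_EMPTY", "empty document")
    return {"body": source}


if __name__ == "__main__":
    corpus = [
        Case("simple_room", "A damp room."),          # positive: must compile
        Case("empty_file", "", expected_error="E_EMPTY"),  # negative: must fail
    ]
    print(run_corpus(toy_compile, corpus) or "all cases passed")
```

“Each bug becomes a new test” then amounts to appending a `Case` that reproduces the bug, so the corpus only ever grows.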

The project is at https://urd.dev if you want to look at the actual specifications before forming an opinion. Everything is public.

Happy to answer questions about the architecture, the AI workflow, or the design decisions. And if the consensus is “you’re reinventing something with extra steps and no benefits”, I’d rather hear it now.