I hope the IFDB guys are reading this.

After a break, I was going to make a 2023 edition of the 2020 Alternative Top 100, which got a lot of positive response. But I can't now, because I can no longer sort by average rating. Alternative solutions are welcome!

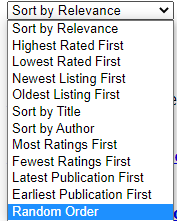

I am not in a hurry but I really hope that the people behind IFDB will make the average rating an optional sorting algorithm again in the near future. Sometimes simple is beautiful. You could simply call it “Sort by Average Rating” and place it near the bottom (see picture). More discussion at the bottom of this post.

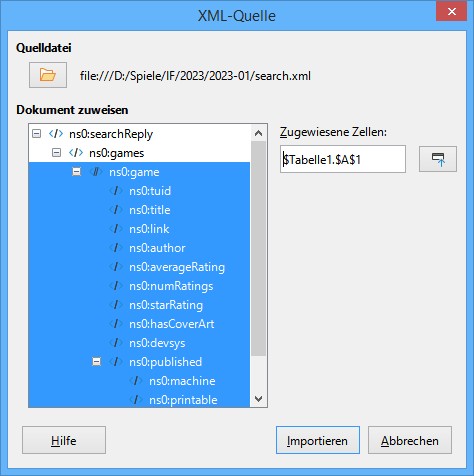

Until this becomes available(?), I might be able to use the data sets that were uploaded to the IF Archive, but they are huge SQL files and I don't know how to get the relevant data into OpenOffice Calc.

Any good advice would be very much appreciated. Thanks!

DISCUSSION OF SORTING ALGORITHMS

The simple “average rating” was replaced with a relatively simple statistical method in 2021, as described here: New Features on IFDB

That might not be a bad idea, because some games have only a single rating, which might come from someone biased. However, there is no proof that this method is always better than the simple average. Sometimes that one 5-star rating was made in good faith, whereas a game with 5 ratings may have 3 suspicious ones.

Example:

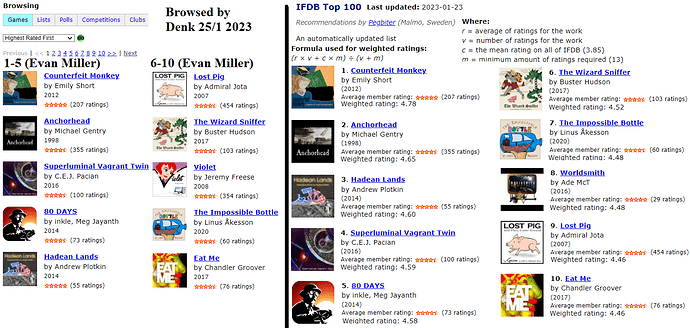

You can see that 80 DAYS is ranked higher, even though its average rating (see the graphical stars) is lower. Is 80 DAYS a better game, just because it got 73 ratings instead of 55?
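
To illustrate how such a flip can happen, here is a minimal Python sketch. It is not IFDB's actual formula: I am assuming a generic "damped" average that pulls every game toward a prior of 3 stars, weighted as 5 phantom ratings. The star distributions are made up for illustration; only the rating counts (55 and 73) come from the screenshot.

```python
def simple_average(ratings):
    """Plain arithmetic mean of a list of star ratings."""
    return sum(ratings) / len(ratings)


def damped_average(ratings, prior_mean=3.0, prior_weight=5):
    """Mean pulled toward prior_mean, as if prior_weight extra ratings existed.

    prior_mean and prior_weight are assumed values, not IFDB's parameters.
    """
    return (prior_mean * prior_weight + sum(ratings)) / (prior_weight + len(ratings))


# Hypothetical star distributions (invented for this example).
game_a = [5] * 40 + [4] * 15   # 55 ratings
game_b = [5] * 52 + [4] * 21   # 73 ratings

for name, ratings in (("Game A", game_a), ("Game B", game_b)):
    print(name, len(ratings),
          round(simple_average(ratings), 3),
          round(damped_average(ratings), 3))
```

With these invented numbers, Game A has the higher simple average, but Game B comes out on top under the damped average purely because it has more ratings.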

I am just saying that “complicated” statistical analysis isn’t always more correct than simple approaches. The optimal method depends very much on the dataset in question. So I think sorting by simple average rating should at least be optional.

If you want a more accurate ranking, I think you should start by removing all ratings given by the game’s author, i.e. when the game is linked to their own profile. For instance, that would probably give a less biased ranking of Cragne Manor. [I was an author too but it is hard - very hard - to be completely unbiased in this case]
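
A rough Python sketch of what I mean by excluding author ratings. The data layout (pairs of rater name and stars, plus a set of author names) is purely hypothetical and not how IFDB stores anything:

```python
def average_without_authors(ratings, authors):
    """Average the star ratings, skipping any given by the game's authors.

    ratings: iterable of (rater_name, stars) pairs (hypothetical layout).
    authors: set of rater names linked to the game as authors.
    Returns None if no non-author ratings remain.
    """
    kept = [stars for rater, stars in ratings if rater not in authors]
    if not kept:
        return None
    return sum(kept) / len(kept)


# Made-up example data.
example_ratings = [("alice", 5), ("bob", 3), ("carol", 4)]
example_authors = {"alice"}

print(average_without_authors(example_ratings, example_authors))
```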

EDIT: About Cragne Manor, apparently only four of the 19 ratings were given by a Cragne Manor author, and one of them excluded his rating from the average, so it seems that unbiased players also think that Cragne Manor is a great game!