It’s not like “throwing more money at the problem” didn’t occur to me. But that requires money in the first place.

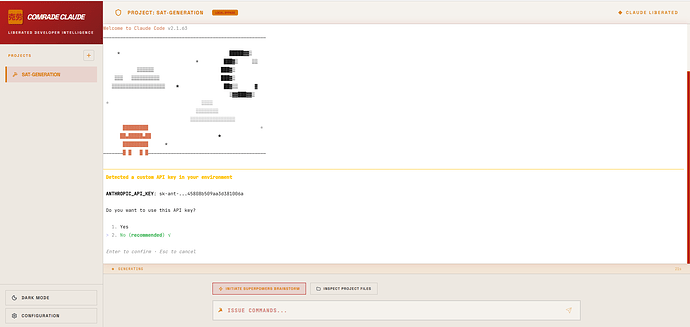

Anyway, I just decided to get creative, and instead get Claude to run on a DeepSeek API key, but I had a little too much fun with building a UI before confirming it actually worked.

Really appreciate this writeup, thanks! The spec-driven cycle hits home with how I’ve been working, and the “almost good enough” warning is something I wish I’d read six months ago (I over-engineer to a fault).

I’m building a choice-based vampire text game (browser-based, not parser) and I use a similar spec-first approach for structure. World docs, character states, arc maps, narrative voice guides. Claude implements within those constraints. That part matches your methodology pretty closely.

Where I differ from your take is the prose boundary. I let AI into the writing, but as a collaborator, not a generator. I come in with the ideas, the emotional beats, the world logic, the voice I want. I work with Claude to draft it, then edit by hand. It’s closer to working with a co-writer who has no taste than to handing off the writing.

What made this survivable was building a detection process. I keep a running list of structural patterns that read as AI-generated. Not vocabulary problems. Assembly problems. Relentless polish. Perfectly balanced parallel constructions. Every paragraph ending on a crafted line. Zero throwaway sentences. When real players flagged specific patterns in my game, those went into the checklist too. Then I go through and deliberately break some of them. A few rough edges make the rest feel authored. Working with Claude as a collaborator tends to leave too much polish, so I go through multiple hand-edits.

Your boundary is the safer one. Mine requires constant vigilance and a willingness to hear “this felt like AI” from testers and actually do something about it. The risk you identified, editing AI prose instead of writing your own and watching the voice drift toward generic, is real. I’ve had to fight it.

Curious whether anyone else is working in that middle space, or if the community consensus is that the line should stay where you drew it?

The biggest events in this community now allow LLM-generated code but forbid LLM-generated text, so I think the consensus on this forum specifically will probably be biased toward David’s interpretation.

@robserpa I’ve used AI a bit for coding solutions, but I just can’t use it as a vehicle to artistically express … me.

I can understand the mad scientist angle though. I just hope that your final creation is what you envision. Most readers gravitate towards certain authors because they have a style about them that’s uniquely satisfying. Are you training the AI with your edits to write more in your authoring style?

All fair points, absolutely. And yes, I’m training it in my style, in addition to making sure the passes are collaborative.

This is a fascinating discussion - I thought I’d throw in my two cents, since I was an early adopter with AI tools going back almost 3 years after a shoulder injury reduced my ability to type to one arm. It was well-timed, actually. It let me keep my software engineering job during a grueling 1+ year recovery.

LLMs have gotten better at coding, this is true, but the improvements are over-hyped and not exponential. The past year especially, the actual models themselves are improving at an increasingly slow pace. Companies like Anthropic and OpenAI are dumping massive amounts of compute into training new models and every new model is only slightly better than the last.

What is improving dramatically is all the tooling around the LLMs themselves. For instance, in my current workflow, the main LLM will spin off multiple parallel agents to investigate the codebase, come up with plans, double-check its own work, etc. This is because the creators of agentic coding tooling (Claude Code, Codex, OpenCode) understand that LLMs will always hallucinate, make mistakes, and fail to follow instructions, so getting them to double-check their own work, gather context from different viewpoints, do web searches to fact-check their hallucinations, etc. is the best way to minimize it. Even with all of this, LLMs are currently incapable of producing bug-free code, and this is true even in small codebases.
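For anyone who hasn’t seen this pattern, here is a minimal sketch of the fan-out-then-double-check shape described above. It is not how Claude Code, Codex, or OpenCode are actually implemented. The functions `investigate`, `verify`, and `run_parallel` are hypothetical stand-ins, and no real LLM is called.

```python
from concurrent.futures import ThreadPoolExecutor


def investigate(task: str) -> dict:
    """Hypothetical sub-agent. A real one would call an LLM here."""
    return {"task": task, "finding": f"notes on {task}"}


def verify(result: dict) -> bool:
    """Hypothetical double-check pass. Here it only rejects empty findings."""
    return bool(result["finding"].strip())


def run_parallel(tasks: list[str]) -> list[dict]:
    # Fan out: each task goes to its own worker, results come back in task order.
    with ThreadPoolExecutor(max_workers=4) as pool:
        results = list(pool.map(investigate, tasks))
    # Double-check: keep only the results that pass the verification step.
    return [r for r in results if verify(r)]


findings = run_parallel(["auth module", "database schema", "test suite"])
```

In a real tool the verify step would presumably be another model call or a test run, which is where most of the reliability gain is supposed to come from.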

I suspect that there’s only so far the clever tool use can take us to get around the flaws inherent to what an LLM is, and once we max that out, we might stall. Maybe in a year or two, until some other non-LLM AI tooling comes along. This goes against what a lot of the hype coming out of AI companies and Silicon Valley says, but you have to remember, the people hyping AI and predicting it’s going to replace everyone’s job are also the people trying to sell you AI.

If you want to make a nice landing page, you can do that in a few hours. If you want to build a database that isn’t going to become a massive quagmire for your project once it scales, you need to actually know what you’re doing. AI tools have made me more efficient at some parts of my job. They haven’t replaced me.

The biggest problem with all the “vibe-coding” is that if you don’t have an experienced engineer telling the LLM what to do, how to build things, giving it specific architectural instructions, it’s just going to build spaghetti code. LLMs are pattern recognition and regurgitation machines. If your codebase is new, or has poor patterns, you’re going to end up with unmaintainable code that doesn’t scale, and becomes increasingly buggy. Even if you have good patterns, the LLM will simply fail to follow them a good portion of the time, and if you’re not reviewing the code, you’ll end up committing anti-patterns.

After a few months, you’ll realize, “Oh, I actually need to learn how systems work, not just tell the AI what I want the end result to look like”. The LLMs are trained to put out the minimum effort to achieve a specific result, and therefore save tokens and cost. They will constantly take shortcuts.

Regarding writing, LLMs are pathologically incapable of writing good interactive fiction. I’ve tried - mostly because I wanted specific story plots created for me to play (as a reader) with unpredictable outcomes. The result was… poor. The plot had little to no continuity, outcomes were not surprising, there was no creativity or originality, or “spark” that lets people produce good writing. Even with a lot of scaffolding and constraints built around them, they still failed to produce anything interesting to read.

Once you understand that LLMs are pattern machines, it makes sense that they’re good at coding. You train the LLM on billions of lines of code and they’ll spot the patterns and repeat them. That doesn’t really work for fiction.

I strongly believe that software engineering jobs are going to go through a major change over the next few years. It already started a couple of years ago. My boss has been encouraging me to use AI tools from day 1, because my productivity would go up. He’s not wrong.

But that doesn’t mean software engineers are going to go away as a type of job. They’re just going to spend their time managing AI agents, designing systems, and checking their work, instead of writing code. Junior engineers might have to learn more advanced skills to stay on top of things. Vibe coding will likely never be a thing at serious companies producing software used by real people.

Then again, who knows, I could be wrong. But this is what I suspect is the most likely outcome from my personal experience and researching this.

I want to push back a bit on the “pattern machines can’t do fiction” thing.

Back to the Future is a pattern. Armageddon (the movie) is a pattern. Nobody complains because it’s executed with enough heart that you don’t care. Netflix figured this out at industrial scale. They’re not stumbling into hits. They know what works structurally and they pour human creativity into that structure. People love it.

LLMs are actually really good at this. Give one a well-known story framework and ask it to help you scaffold around it. What is probably missing for IF is the level of academic work and minute dissection performed on movie scripts.

Where LLMs fall over is the human bit. The voice, the surprise, the weird details that make someone care about a character. They all play it too safe, no argument there. But that’s the job. You bring the madness, the LLM brings the scaffolding.

Sometimes cookie-cutter structure is exactly what people want. I wouldn’t even know where to start to look for what works and does not work for IF. I am more interested in the underlying technology than the narrative experience. However, I am sure that there is a pattern here.

Edit: There obviously still is an art to it, just look at Disney :D. But honestly, what Netflix is spitting out is maddeningly good. Has anybody seen the new series The Recruit?

It is extremely common for people to complain that the modern media landscape feels increasingly bland and formulaic due to corporations insisting on sticking to tried-and-true patterns, rather than taking risks on novel art. The idea that “cookie-cutter art is great, just look at how good the content on Netflix is” seems unlikely to persuade people who see AI-generated art as the extreme conclusion of an industrialization of art that they were already critical of.

@Storyfall My understanding is that AI will produce the most probable responses from the data available to it. In a way, it will only produce the most homogeneous consensus of humanity’s writing. So what you’re saying is that by having a group of AIs (parallel agents) convene about what the response should be, hopefully they reply with something less popular (more original)… but, doesn’t less popular mean less associated data to draw from and even a greater chance to go off the rails? Am I thinking about this correctly? It sounds like a slippery slope.

@Urd There are patterns that are definitely tried and true (like the hero’s journey), but the pattern isn’t the part we praise a work for… it’s the “heart” part that you mention.

Yes, “cookie-cutter structures” can be desirable, but only because we recognize layers to a story… and sometimes we want to focus on the other aspects. Predictability of plot provides less friction to appreciate the action star being a badass or the villain getting their comeuppance. But even those aspects require nuance that I don’t think you are appreciating. Even dumb shit can resonate with us… but it’s not because it’s dumb.

Something to ponder: There’s also the unspoken aspect of humanity that never gets written down… ever. AI only knows us by what we are willing to share in our published words within our peer groups. At least our physical experience with each other gives us insight into reading between the lines, but an AI can never know that aspect of us.

Not that it’s relevant to anything specific here, but I’m reminded of a short story by Isaac Asimov called Cal. The plot sort of goes as follows:

A prominent writer realizes his robot wants to write, just like he does. So he entertains the idea and gets a technician to give the robot more intelligence for grammar, spelling and general writing skills. The robot starts from humble beginnings, but eventually surpasses the ability of his master. (We, the readers, get to witness the progression of the robot’s stories as short stories within this short story.) The writer has no desire to play second fiddle so he explains the situation to the technician and demands the robot be stripped of its writing ability (against the technician’s protest). The robot overhears this and concocts a plan to murder its master and offer its services to the technician (making him extremely wealthy) in trade for the technician’s silence and allowing the robot to serve its newfound purpose… to be a writer.

I think Asimov touched on this more than once. In the short story Galley Slave, a robot is employed as a proofreader by an author who subsequently sues the manufacturers after the robot introduces errors into the work. It turns out that he ordered the robot to do this because he didn’t want human academics to be replaced by robot authors. Susan Calvin, who is generally presented as quite pro-robot, is described as being unable to fully suppress the sympathy she feels for him even as it becomes clear that he has ruined his own livelihood.

I’ve not seen it, but adding it to my list for this weekend!

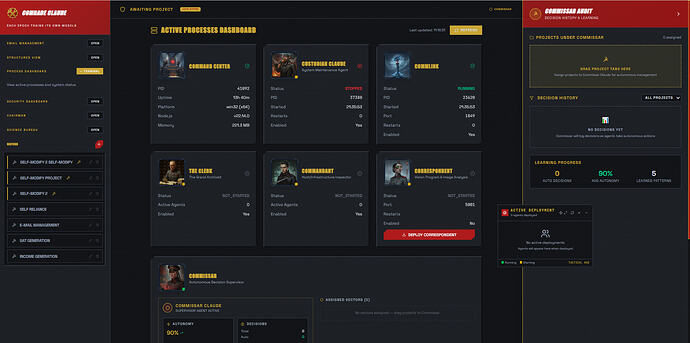

In the meantime, as we have now well drifted from the main topic (should we change the topic name?) I did want to share how I went a little overboard on my side project.

I gave each agent portraits, and voice lines, and it’s all starting to get integrated so much so that it’s basically a little agentic RTS by this point.

I even put in cycling taglines in the top left.

I still wouldn’t let it write a novel, but I do like having a Command & Conquer style dashboard to work with. 9-year-old me would be very giddy to hear current me is building this as a side project.

However, I think I won’t be getting any jobs in software development soon, despite knowing architecture and programming by hand. The future for entering that career path does not seem hopeful.