I’ve finally got reading and writing in text mode working. Now I’m trying to implement the binary streams.

These are supposed to read and write 4 byte integers. But if I do this I’m breaking the text mode tests again.

Is there a trick to this or is the Glk spec wrong? I’m happy to say, that WinGlulxe fails the binary tests, too. So it’s not just me  .

.

Exactly! Zag now passes all tests in externalfile.ulx. But most of the tests in extbinaryfile.ulx fail. When I try to fix the binary tests, the tests in externalfile.ulx fail. WinGlulxe doesn’t pass the binary tests either.

The two tests aren’t contradictory, obviously. I can’t tell what you’re doing wrong just from that.

WinGlulxe probably hasn’t been updated to include the UTF-8 case.

Not yet - I was waiting for you to release a new Glk specification with the UTF-8 requirement in it.

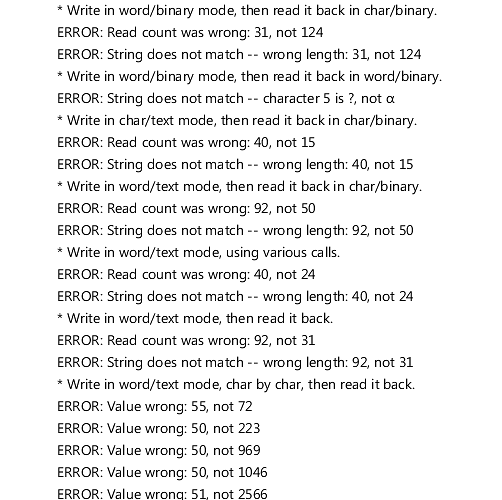

I have attached a screenshot of the output of the extbinary tests. The biggest problem is, that I’m reading and writing binary streams as single bytes, because that works with the externalfile tests. (UTF-8 is working quite well, too.) Right now I’m not sure how to accommodate both sets of tests.

Mixing ASCII, Unicode and Binary formats together like that is a pain. Usually one chooses one format and sticks to it.

I’m sorry, I let this thread slide.

The writing format should be completely determined by the combination of two flags: text/binary and char/unicode. The four outcomes are defined at github.com/erkyrath/glk-dev/wik … ec-changes .

The confusing part is that the text/binary flag is set when the fileref is created; it gets inherited by the stream when you call glk_stream_open_file or glk_stream_open_file_uni.

The char/unicode flag, obviously, is determined by whether you call glk_stream_open_file or glk_stream_open_file_uni. (Not by whether you call glk_put_char or glk_put_char_uni, etc. That just affects the argument type.)

Does that help?

Thanks for the explanation, but I figured most of this out on my own in the meantime.

I have still two problems left.

Writing in unicode/text mode I convert line breaks to system line breaks (as per the spec). On Windows this is $0D $0A. Reading this back in text mode I convert this back to $0A. But when reading in char/binary mode I don’t convert the line breaks. Otherwise it would be impossible to read this combination of bytes for whatever reason. Still the binary tests complain, that my read count is too long by two (52 instead of 50). I do not think this is right.

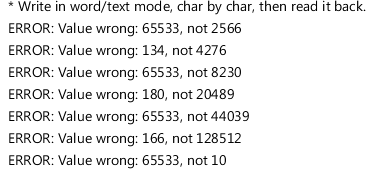

I’m unable to read the last test case (“Write in word/text mode, char by char, then read it back.”). I’m not even sure if I’m writing in correctly. I have attached a screenshot. Maybe you can make heads or tail out of it.

Also it would be nice, if the tests could be executed individually. This would make debugging much easier. I would do it myself, but I’m afraid my I6 knowledge is not up to the task.

Since this stuff hasn’t been implemented on Windows, it hasn’t been tested there. It’s possible that the test is wrong for that case. I’ll take a look at my test logic.